02 — The Story

Five scenes, told through scroll

The site is one long page. There's nothing to click through: you scroll, and the camera moves with you. The story unfolds in five short scenes:

1. The Book. A quiet country, a place most people never visited.

2. A Girl Reading. The northern reaches of Gyeonggi, a child who waited.

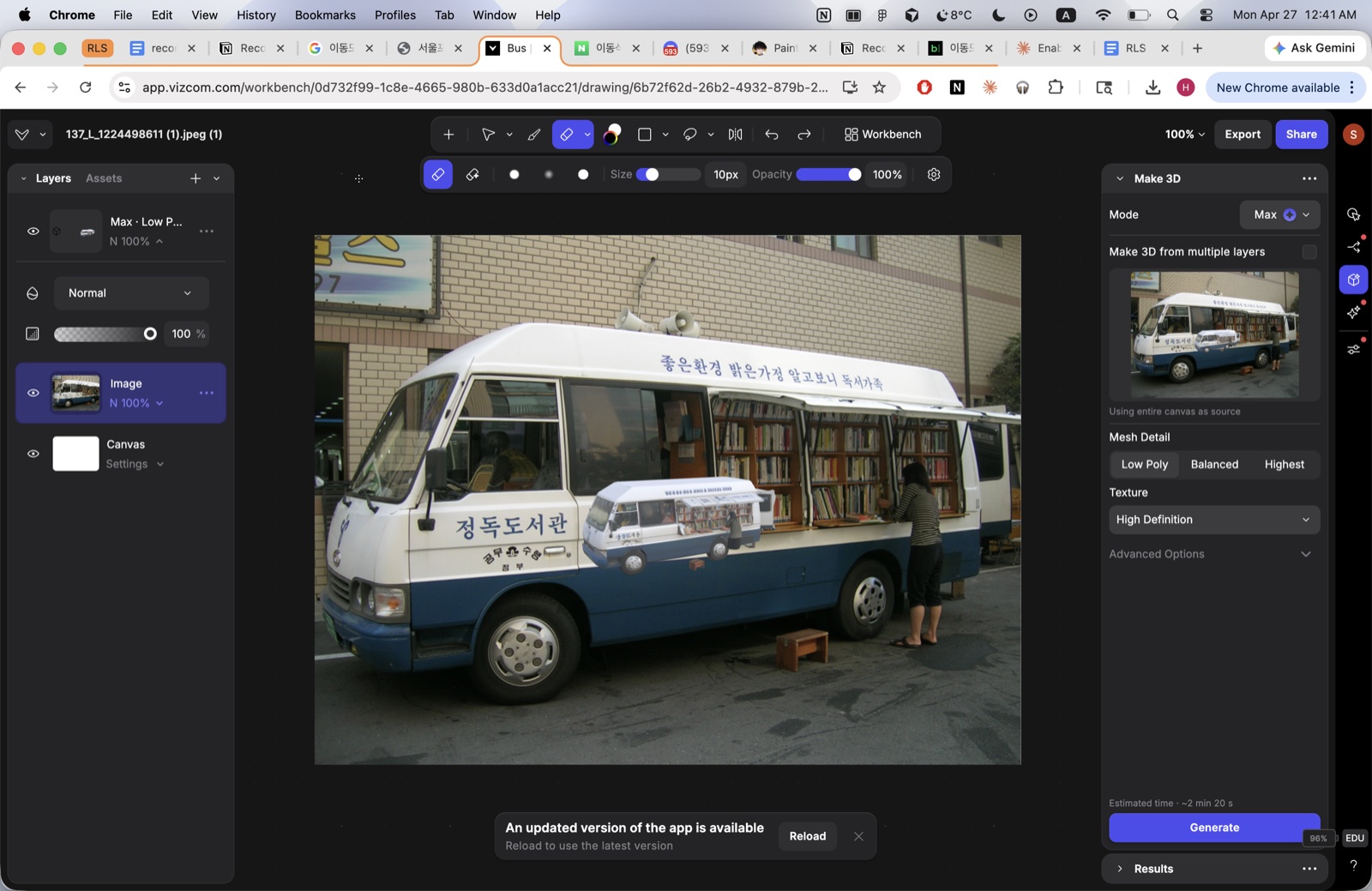

3. Thursday. The bus appears. The 3D camera animation begins.

4. Hope on Wheels. One bus, one librarian, and a chance to explore beyond the village.

5. Memory. Years later, in cities with grand libraries, still remembering the weeks I waited.

Scenes 1 and 2 are AI-generated paintings that slide up from below as you scroll. From scene 3 onward, the painterly 3D bus takes over, and the camera circles it at exactly the rate you scroll: stop scrolling, and the camera stops too.
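One way to get "the camera moves exactly as fast as you scroll" is to make the camera pose a pure, linear function of the scroll offset, with no easing or inertia. The sketch below is only an illustration. It is not the site's actual code, and its assumptions are spelled out here. The bus sits at the origin. The camera orbits it on a horizontal circle. Scene 3 occupies a known pixel range of the page. The painting slide-up in scenes 1 and 2 is a plain vertical offset. Every name in it (`OrbitConfig`, `cameraAt`, `slideUpOffset`, and the config fields) is hypothetical.

```typescript
// Hypothetical config: which slice of the page drives the orbit, and its shape.
interface OrbitConfig {
  startY: number; // scroll offset (px) where the orbit begins (assumed)
  endY: number;   // scroll offset (px) where the orbit ends (assumed)
  radius: number; // horizontal distance from camera to the bus at the origin
  height: number; // camera height above the bus
  sweep: number;  // total orbit angle in radians over the whole range
}

interface CameraPose {
  x: number;
  y: number;
  z: number;
}

// Normalize a scroll offset into [0, 1] within [startY, endY], clamping outside it.
function progress(scrollY: number, startY: number, endY: number): number {
  const t = (scrollY - startY) / (endY - startY);
  return Math.min(1, Math.max(0, t));
}

// Linear mapping: equal scroll distance gives equal orbit angle, so the camera
// moves only while the page scrolls and holds still when scrolling stops.
function cameraAt(scrollY: number, cfg: OrbitConfig): CameraPose {
  const angle = progress(scrollY, cfg.startY, cfg.endY) * cfg.sweep;
  return {
    x: cfg.radius * Math.cos(angle),
    y: cfg.height,
    z: cfg.radius * Math.sin(angle),
  };
}

// Slide-up for the scene 1 and 2 paintings: the downward offset shrinks
// linearly from `distance` px to 0 as the scroll crosses [startY, endY].
function slideUpOffset(
  scrollY: number,
  startY: number,
  endY: number,
  distance: number,
): number {
  return (1 - progress(scrollY, startY, endY)) * distance;
}
```

In a browser, a scroll listener would call `cameraAt(window.scrollY, cfg)` and copy the result into the 3D camera. With three.js (again an assumption, since the renderer isn't named here), that would be `camera.position.set(p.x, p.y, p.z)` followed by `camera.lookAt(0, 0, 0)`. Keeping the mapping pure and free of easing is what ties camera motion to scroll speed. Adding smoothing would make the camera lag behind the scrollbar.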